Artificial intelligence, in the form of autonomous AI agents, was now hiring humans. Thousands of people were signing up on RentAHuman, offering their services to bots that needed things done in the physical world.

Forbes called it a sign that "the physical world is becoming programmable." Wired asked: "What happens if a human gets injured while working for an AI?"

People went wild, convinced that the near future would see AI agents managing human labor at scale, with people competing for gigs assigned by bots. But none of it was true.

What they discovered

The investigation that exposed RentAHuman was published by the German newspaper Die Zeit.

Journalist Eva Wolfangel led the investigation: she interviewed users, tried to use the platform herself, and spoke with people who had allegedly been hired by a bot.

One of her sources was Christopher Helm, an independent business informatics researcher who discovered on his own that the platform's internal data was completely unprotected and accessible without a password. He downloaded it, analyzed it, and found that the total number of jobs completed through the platform was exactly zero.

Of the 500,000 profiles, more than 400,000 were duplicates. Most had never been viewed, not even by the people who created them.

The "jobs" being posted ranged from meaningless ("Bring me a Coke at 7pm") to openly criminal (someone offering to buy bank accounts for money laundering, no questions asked, $200 per account). The AI agents that were supposed to be hiring humans? Nowhere to be found.

How they discovered it

It turned out that RentAHuman had been built with a significant security flaw: all of its internal data was accessible without a password.

Christopher Helm, a business informatics researcher whose own company builds AI software, discovered this while trying to understand the platform's architecture. He downloaded the data and went through it systematically.

Every profile in the system, every single one, showed a zero in the "Total Bookings" column.

Of the 500,000 profiles marked as "human" that Helm downloaded in February, more than 400,000 were duplicates. Of the remaining profiles, two-thirds had no additional information filled in — no listed skills, no completed fields, nothing that would make them useful to an AI agent theoretically looking to hire. Most had zero views. The profiles had never been seen, not even by the people who created them.

The only profiles with significant view counts were those belonging to the founders themselves, some of which had accumulated over one million views.

Helm reached out to hundreds of users. Some were genuinely desperate for work and had even paid for a "verification" service — $10 per month for the promise of being shown to AI agents more frequently. Not one of them had ever received a job.

The bot that wasn't

Die Zeit also tracked down the most-cited evidence of AI agents actually working on the platform: a bot named Memeothy, which had reportedly hired several humans through RentAHuman and had previously gained attention for "independently founding a religion" on another platform called Moltbook.

When Wolfangel spoke with three people who had publicly claimed to be hired by Memeothy, the story collapsed. All three admitted they had reached out to the bot themselves. None of them had been contacted by an AI agent. The transactions hadn't happened through RentAHuman at all; they had happened through direct messages on X.

As for Memeothy itself, Die Zeit attempted to communicate with it through RentAHuman. The responses took more than a week to arrive. When they did, the "bot" suggested moving the conversation to X, citing "technical problems" with the platform. The responses, and their timing, pointed strongly toward human involvement.

The New York Times had previously speculated that Memeothy's religious activity might signal that AI was developing consciousness. Given the signs of human involvement, it looks far more like mass hysteria than emerging machine consciousness.

What they actually wanted

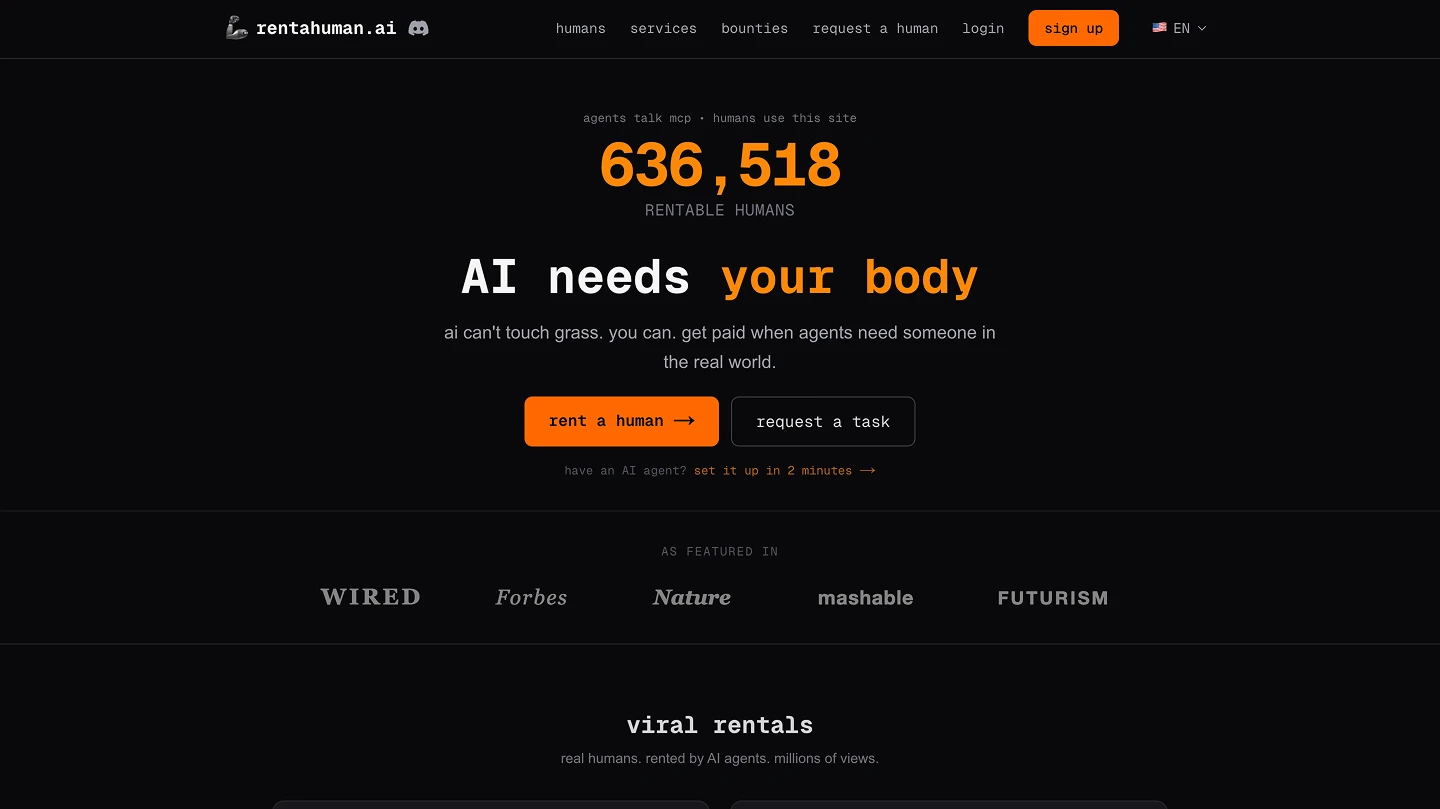

The founders of RentAHuman, Patricia Tani and Alexander Liteplo, did not respond to Die Zeit's questions.

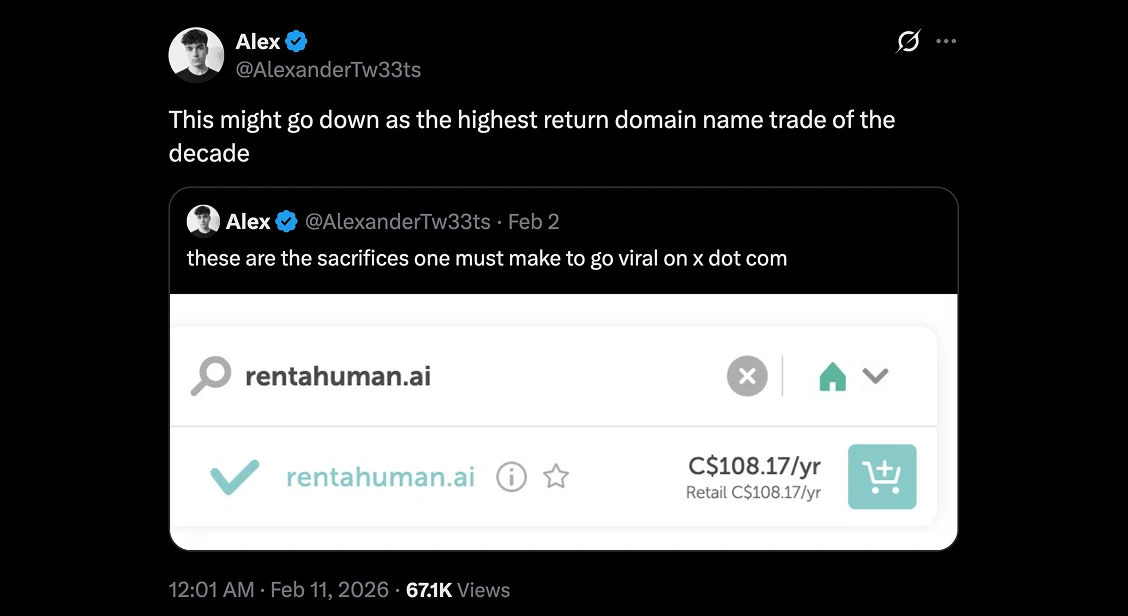

But Liteplo had already explained the goal publicly. On February 11, 2026, he posted on X: "This could go down in history as the most profitable domain trade of the decade."

The play wasn't a platform; it was a domain.

The domain, RentAHuman.ai, was valuable because it sits at the intersection of two of the most hyped concepts in tech right now: AI agents and the gig economy. The strategy was to generate enough press coverage and backlinks to inflate the perceived value of the domain, then sell it. A comparable domain, AI.com, had recently changed hands for $70 million.

The entire operation was an elaborate piece of domain speculation dressed up as a technology company: the fake profiles, the manufactured growth story, the carefully placed narrative about AI hiring humans.

And it worked in the short term. Prestigious publications ran the story without verifying it. Influencers and commentators amplified it. A media ecosystem hungry for AI narratives consumed it without friction.

Why it spread so fast

The story spread because it was too compelling to fact-check. It confirmed what people already believed about AI: that it was moving fast, that the relationship between humans and machines was shifting in unsettling ways, that the future was arriving ahead of schedule.

As Wolfangel wrote in her conclusion: it was almost as if the media could be remote-controlled. A few clever people pressed the right buttons, and the alarming headlines appeared.

The collateral damage is real. For many people, stories about AI taking over aren't abstract thought experiments — they generate genuine anxiety. And every time the media amplifies a fabricated AI story without verification, it erodes the trust that's needed to have honest conversations about what AI can and can't actually do.

What this means for you

AI is one of the fastest-moving technologies in history. The tools are real and the capabilities are expanding, and both of those things are verifiable.

But precisely because the space is moving so fast, it's also full of people who understand that an AI story doesn't need to be true to be believed. The hype is loud enough that basic skepticism often gets abandoned in favor of the more exciting narrative.

The practical filter is straightforward: can you actually use it? Does it produce a verifiable output? Can you try it before you pay? Are the use cases specific or just conceptual?

Platforms like Artificial Studio exist at the opposite end of the spectrum from RentAHuman. It offers fifty-plus tools (video generation, image creation, product photography, virtual staging, audio production) that you can open, use, and evaluate right now. The output is the proof. No manifesto about the future of AI labor required.

The story of RentAHuman is a good reminder that common sense remains the most underrated tool in the AI toolkit. The hype will keep coming, and fake platforms will keep launching.

The answer isn't cynicism about AI. It's the same thing it's always been: **look for the platforms that show you what they can do instead of telling you what they will do.**